DreamServer and the Return of Owning Your AI

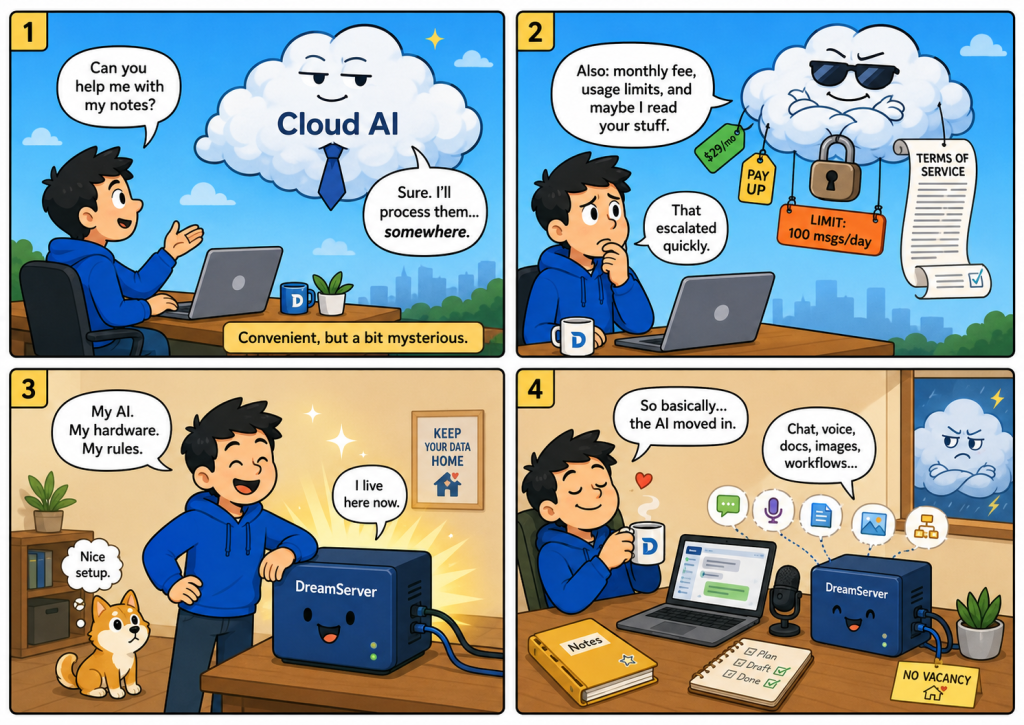

For the last few years, artificial intelligence has mostly felt like something we rent. We open a browser, type into a chat box, and somewhere far away a company’s servers do the thinking. That arrangement is convenient, powerful, and for many people perfectly acceptable. But it also comes with a quiet trade-off: your prompts leave your machine, your usage depends on someone else’s infrastructure, and your access can be priced, throttled, changed, or removed by a provider you do not control. DreamServer is built around a different idea: what if a complete AI system could live on your own hardware?

The project, developed by Light Heart Labs and published on GitHub under the Apache 2.0 license, describes itself as a local-first AI stack for inference, chat, voice, agents, workflows, retrieval-augmented generation, image generation, privacy tools, and observability. In plain English, it is not just another chatbot. It is closer to a self-hosted AI workstation: one package that tries to bring together many of the things people currently use separate cloud services for.

That distinction matters. Running a local language model is no longer exotic. Tools such as Ollama, Open WebUI, llama.cpp, ComfyUI and Qdrant have already made local AI much more approachable. But the typical local AI setup still feels like a box of parts: one tool for chat, another for images, another for document search, another for automation, another for monitoring, and then a long evening convincing them all to talk to each other. DreamServer’s promise is that the assembly work should not be the main event.

At its simplest, DreamServer gives you a web interface for chatting with a local model. But around that core it adds a wider ecosystem. Open WebUI provides the familiar browser-based chat experience; llama-server handles local model inference; LiteLLM acts as a gateway for local, cloud, or hybrid model routing; Whisper and Kokoro cover speech-to-text and text-to-speech; Qdrant and embeddings support document-based search; SearXNG and Perplexica add search and research capabilities; n8n brings workflow automation; ComfyUI adds image generation; and the dashboard keeps an eye on service health and GPU usage.
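llama-server (the HTTP server that ships with llama.cpp) and LiteLLM both expose an OpenAI-style `/v1/chat/completions` API. In practice, that means a client can talk to the local stack the same way it would talk to a cloud provider. The sketch below makes some assumptions. It targets an endpoint at a hypothetical `http://localhost:8080` with a hypothetical model name. The optional bearer token stands in for whatever credentials the installer generates. DreamServer's actual ports, model identifiers and authentication may differ, so check its documentation before relying on any of these values.

```python
import json
import urllib.request
from typing import Optional

# Hypothetical values: DreamServer's real port and model name may differ.
BASE_URL = "http://localhost:8080"
MODEL = "local-model"


def build_chat_request(
    prompt: str,
    base_url: str = BASE_URL,
    model: str = MODEL,
    api_key: Optional[str] = None,
) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for a local endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Assumption: the gateway accepts a standard bearer token.
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def chat(prompt: str, api_key: Optional[str] = None) -> str:
    """Send the request and return the assistant's reply text."""
    request = build_chat_request(prompt, api_key=api_key)
    with urllib.request.urlopen(request, timeout=60) as resp:
        body = json.load(resp)
    # OpenAI-compatible servers return the reply under choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

With a server actually listening at that address, `chat("Summarise this paragraph in one sentence.")` returns the model's reply. None of the prompt leaves the machine unless the gateway has been configured to route it to a cloud model.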

That breadth is where the project becomes interesting for a general audience. DreamServer is not really selling the idea that everyone should become a machine-learning engineer. It is selling the opposite idea: that local AI should become appliance-like. You should be able to install it, open a browser, and use it without understanding every container, port, model file, vector database, or GPU backend underneath.

The installation process reflects that ambition. The project documentation says the installer detects your GPU, selects an appropriate model tier, checks dependencies, generates credentials, asks which optional components you want, and starts the required services. It also includes a “bootstrap” mode that downloads a smaller model first so the user can begin chatting while the larger model continues downloading in the background.
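The tiering and bootstrap behaviour can be pictured as two small decisions. First, pick the largest model tier that the detected GPU memory allows. Second, serve a small model until the chosen one has finished downloading. The sketch below is a simplification under stated assumptions. The VRAM thresholds and tier names are invented for illustration and are not DreamServer's real table. It also ignores factors such as system RAM and free disk space that a real installer may need to weigh.

```python
from dataclasses import dataclass

# Hypothetical tiers: (minimum VRAM in GB, tier label), largest first.
TIERS = [
    (48.0, "tier-xl"),
    (24.0, "tier-large"),
    (12.0, "tier-medium"),
    (0.0, "tier-small"),
]
# Hypothetical small model served while the larger one downloads.
BOOTSTRAP_MODEL = "tier-small"


def select_tier(vram_gb: float) -> str:
    """Return the largest tier whose VRAM threshold the detected GPU meets."""
    for min_gb, name in TIERS:
        if vram_gb >= min_gb:
            return name
    raise ValueError("VRAM must be non-negative")


@dataclass
class BootstrapState:
    target_model: str
    target_downloaded: bool = False

    def active_model(self) -> str:
        """Serve the bootstrap model until the target model has finished downloading."""
        return self.target_model if self.target_downloaded else BOOTSTRAP_MODEL
```

For example, a 16 GB card lands in `tier-medium` here. The user chats with `tier-small` until `target_downloaded` flips to `True`, at which point requests move to the larger model.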

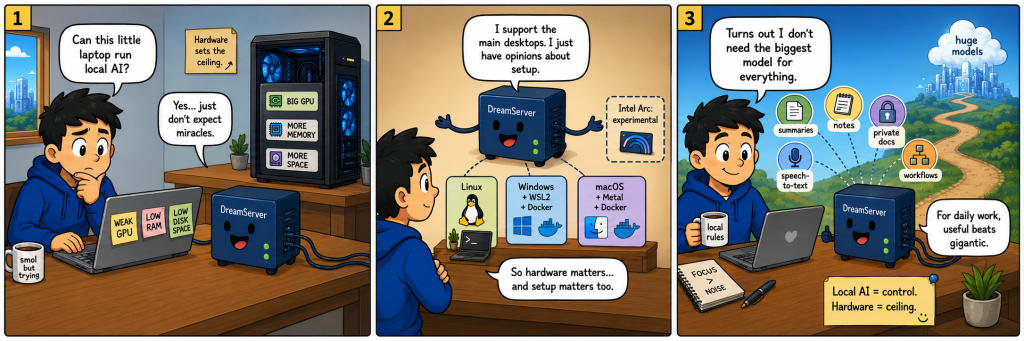

Hardware is still the hard limit. DreamServer is not magic. If your machine has a weak GPU, limited memory, or little free disk space, you will not get the same experience as someone running a high-end NVIDIA card, an AMD Strix Halo system, an Apple Silicon machine with generous unified memory, or an enterprise GPU. The support matrix currently lists Linux, Windows and macOS as supported, with Intel Arc GPU support described as experimental. Windows relies on Docker Desktop with WSL2, while macOS uses native Metal acceleration for llama-server and Docker for the remaining services.

That trade-off is central to the whole local AI movement. Cloud AI gives you huge models without owning huge hardware. Local AI gives you control, but your own machine becomes the ceiling. For a casual user, that may mean accepting a smaller model. For a developer, a small business, a researcher, or a privacy-sensitive organisation, the compromise may be worth it. Not every task needs the largest frontier model. Many everyday tasks – summarising documents, drafting internal notes, searching private knowledge bases, generating routine content, transcribing speech, automating local workflows – can be valuable even when performed by a smaller local model.
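A rough rule of thumb shows why the machine becomes the ceiling. A model's weights need about (parameters × bits per weight ÷ 8) bytes, and that is before counting the context cache and runtime overhead. The sketch below applies that rule. The 20% overhead factor is an assumption for illustration only, since real usage varies with context length, backend and quantisation format.

```python
def weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight memory in GB (10**9 bytes): parameters x bits / 8."""
    return params_billion * bits_per_weight / 8


def fits_in_memory(
    params_billion: float,
    bits_per_weight: float,
    available_gb: float,
    overhead: float = 1.2,  # assumed 20% extra for context cache and runtime
) -> bool:
    """Rough check of whether a model fits in the given GPU or unified memory."""
    return weight_gb(params_billion, bits_per_weight) * overhead <= available_gb
```

By this estimate, a 7-billion-parameter model quantised to 4 bits needs roughly 3.5 GB for its weights and fits comfortably on an 8 GB card. A 70-billion-parameter model at the same precision needs about 35 GB for its weights alone, which is beyond a 24 GB consumer GPU.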

The privacy argument is obvious, but it should not be oversold. Running AI locally can reduce how much data you send to external providers. That is valuable for confidential documents, client notes, legal work, healthcare-adjacent content, internal strategy, personal archives, and anything you simply do not want processed elsewhere. But local does not automatically mean secure. A badly configured local server can become a public problem. In January 2026, SentinelLABS and Censys reported finding more than 175,000 exposed Ollama hosts across 130 countries, showing how easily self-hosted AI can become risky when placed on the open internet without proper controls.

DreamServer appears aware of this boundary. Its architecture documentation says services bind to localhost by default, and its stack includes privacy and observability components such as Privacy Shield, Token Spy and Langfuse. That is the right instinct: local AI needs boring operational hygiene as much as it needs exciting model support.
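Binding to localhost is the key detail. A service listening on the loopback address cannot be reached from other machines, while one listening on `0.0.0.0` accepts connections on every network interface. Here is a minimal audit, assuming you can list each service's bind address. The service names in the usage example are hypothetical.

```python
import ipaddress
from typing import Dict, List


def is_local_only(bind_host: str) -> bool:
    """Return True if a service bound to bind_host is reachable only from this machine."""
    if bind_host.lower() == "localhost":
        return True
    try:
        return ipaddress.ip_address(bind_host).is_loopback
    except ValueError:
        # Other hostnames could resolve to any interface, so treat them as exposed.
        return False


def exposed_services(bindings: Dict[str, str]) -> List[str]:
    """List the services (sorted by name) that listen beyond the loopback interface."""
    return sorted(name for name, host in bindings.items() if not is_local_only(host))
```

For instance, `exposed_services({"chat": "127.0.0.1", "search": "0.0.0.0"})` flags only `search`. In Docker terms, publishing a port as `127.0.0.1:3000:8080` keeps it on loopback, whereas a bare `3000:8080` listens on all interfaces.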

The most compelling part of DreamServer is its philosophy of integration. Open WebUI is already a strong self-hosted AI interface, designed to work offline and connect to local or cloud models. Qdrant is already a serious vector database for retrieval-augmented generation. ComfyUI is already a powerful node-based interface for image generation workflows. DreamServer’s value is not that it invented every piece. Its value is that it tries to turn the pieces into a coherent local AI environment.

That also means the project’s success will depend less on slogans and more on maintenance. A system made from many services is only as good as its updates, defaults, documentation, security posture and failure recovery. Local AI users do not want to debug twelve containers every Saturday. They want the boring miracle: it starts, it works, it updates cleanly, and it does not eat their machine alive.
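Part of that "it starts, it works" experience comes down to routine checks like the ones a health dashboard performs. Below is a minimal sketch of a health sweep. The service names, ports and paths are assumptions. llama-server does expose a `/health` route, but DreamServer's actual layout may differ. The probe is passed in as a parameter so it can be swapped for a fake when testing.

```python
import http.client
import urllib.request
from typing import Callable, Dict

# Hypothetical endpoints: real service names, ports and health paths may differ.
SERVICES: Dict[str, str] = {
    "llm": "http://127.0.0.1:8080/health",
    "chat-ui": "http://127.0.0.1:3000/",
}


def http_probe(url: str, timeout: float = 3.0) -> bool:
    """Return True if the URL answers with a 2xx status within the timeout."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return 200 <= resp.status < 300
    except (OSError, http.client.HTTPException):
        # Covers refused connections, timeouts and HTTP error statuses (HTTPError).
        return False


def health_report(
    services: Dict[str, str],
    probe: Callable[[str], bool] = http_probe,
) -> Dict[str, str]:
    """Map each service name to 'up' or 'down' using the given probe."""
    return {name: ("up" if probe(url) else "down") for name, url in services.items()}
```

Run on a schedule, a loop like this catches a crashed container before a user does. A stack of many services needs exactly that kind of unglamorous check.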

For now, DreamServer feels like part of a broader shift. AI is moving in two directions at once. At one end, giant cloud platforms continue to build the biggest models, the smoothest interfaces and the deepest integrations. At the other, open models, consumer GPUs, compact inference engines and self-hosted tools are making it realistic for individuals and small teams to run useful AI on hardware they own.

DreamServer sits firmly in that second camp. It is ambitious, a little rebellious, and probably not for people who panic at the sight of Docker. But it points toward a future that feels important: AI as something you can possess, inspect, modify, switch off, extend and run under your own roof. The question is no longer whether local AI can work. It can. The real question is whether projects like DreamServer can make it feel less like a hobbyist ritual and more like a normal piece of personal or business infrastructure. That is the interesting bit. Not the chatbot. The ownership.

Link: https://github.com/Light-Heart-Labs/DreamServer